
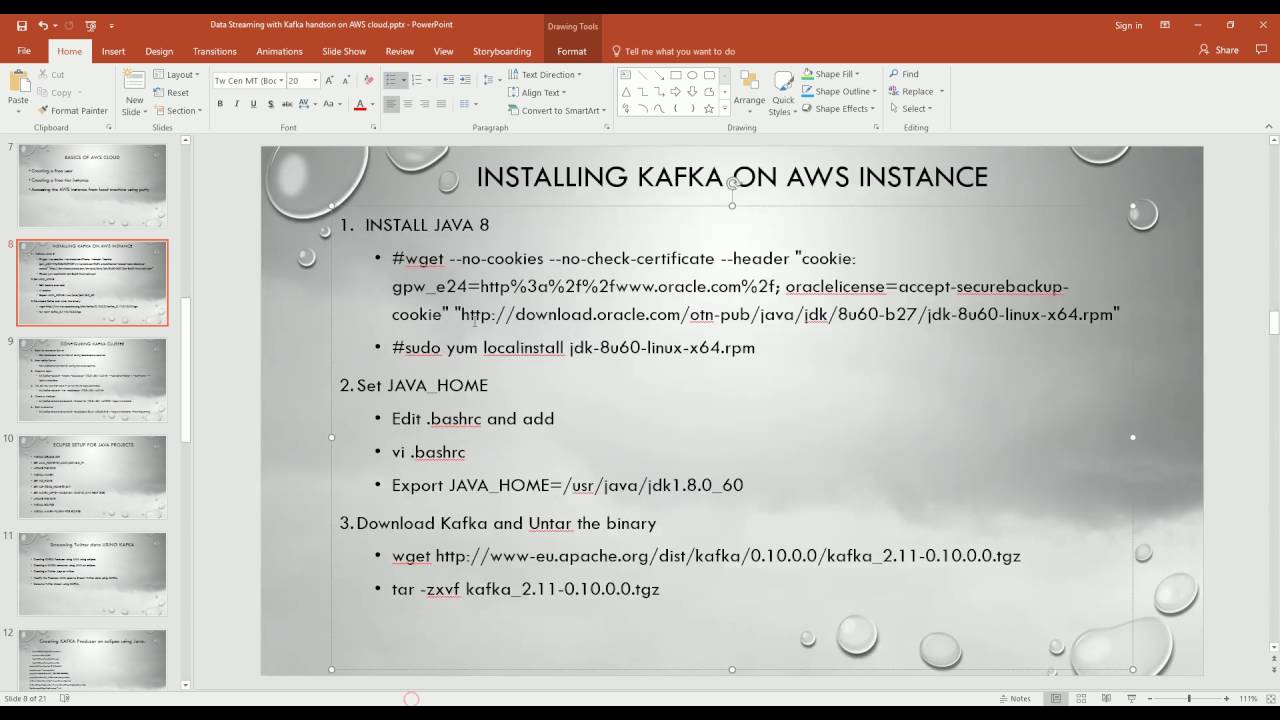

To install Kafka on Linux, download the Kafka binaries and extract the archive with the tar command. To configure Kafka, open server.properties in an editor such as nano and add your settings at the bottom of the file.

Mac users who have Homebrew installed can use it instead: run 'brew install kafka', press Enter, and wait for the installation to finish. ZooKeeper can then be started with the command below. The configuration file path may differ depending on your installation.

$ zookeeper-server-start /usr/local/etc/kafka/zookeeper.properties

Developing, debugging and unit testing a Kafka application is complicated. You might be setting out to develop a Kafka producer, a consumer or a Kafka Streams application. In all cases, you need to install and configure at least four tools on your local machine.

Items 1, 3 and 4 are everyday activities for an experienced Java developer. Installing a single-node Kafka cluster on your local machine, however, is specific to Kafka development. The Apache Kafka quick start is well documented for Linux, so life is easy when you have a Linux or Mac laptop with an IDE such as IntelliJ IDEA. Many people work on a Windows machine, though. In this post, we will cover installing the JDK and a single-node Kafka cluster on Windows 10.

Installing JDK 1.8

Download the latest Oracle JDK 1.8 update from the Oracle Technology Network website. You may have to accept the license agreement before downloading the appropriate installer for your system type.


Once you have downloaded the appropriate JDK 1.8 installer, run it and follow the on-screen instructions. The installation process should be relatively straightforward. The installation wizard lets you select the JDK installation location. You can keep the default value, but remember the location because it is going to be your Java Home.

Once the JDK installation is complete, you must configure your PATH and JAVA_HOME environment variables. You can set up your environment variables using the following steps.

The final step is to test your JDK installation. Start a Windows command prompt and test the JDK using the command below.

The output should look similar to the following.
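A common way to run this check is `java -version`, which prints a banner such as `java version "1.8.0_181"` (the exact update number will vary). As a rough sketch, the Python helper below runs that command and extracts the major and minor version. The function names are hypothetical and chosen only for illustration.

```python
import re
import subprocess


def parse_java_version(banner):
    """Return (major, minor) parsed from a `java -version` banner, or None."""
    match = re.search(r'version "(\d+)(?:\.(\d+))?', banner)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2) or 0)


def installed_java_version():
    """Run `java -version` and parse its banner.

    The JVM writes the version banner to stderr, not stdout.
    Returns None when java is not found on PATH.
    """
    try:
        result = subprocess.run(
            ["java", "-version"], capture_output=True, text=True
        )
    except FileNotFoundError:
        return None
    return parse_java_version(result.stderr)
```

For a JDK 1.8 installation, `installed_java_version()` is expected to return `(1, 8)`. A result of `None` usually means the JDK `bin` directory is missing from PATH.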

Configure and Test Kafka

Installing a single-node Apache Kafka cluster on Windows 10 is as straightforward as doing it on Linux. You can follow the steps below to run and test Kafka on Windows 10.

Configure Kafka for Windows 10

We also need to make some changes in the Kafka configurations.

Open kafka_2.12-2.0.0\config\server.properties and change or add the following configuration properties.
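As an illustration of the kind of overrides a single-node broker needs, the sketch below renders a few server.properties lines. The property keys are standard Kafka broker settings, but the exact set and values are assumptions rather than the post's own list, and the function name is hypothetical. Note that Java properties files treat a backslash as an escape character, so the sketch writes the Windows path with forward slashes.

```python
def render_broker_overrides(log_dir, zookeeper_connect="localhost:2181"):
    """Return server.properties lines for a single-node development broker."""
    # Backslashes are escape characters in .properties files, so a path
    # such as C:\kafka_logs is written as C:/kafka_logs instead.
    safe_log_dir = log_dir.replace("\\", "/")
    settings = {
        "log.dirs": safe_log_dir,
        "zookeeper.connect": zookeeper_connect,
        # Topic defaults of one, because only one broker exists (assumed set).
        "offsets.topic.num.partitions": 1,
        "offsets.topic.replication.factor": 1,
        "default.replication.factor": 1,
        "min.insync.replicas": 1,
    }
    return "\n".join(f"{key}={value}" for key, value in settings.items())


if __name__ == "__main__":
    print(render_broker_overrides(r"C:\kafka_logs"))
```

The printed lines can be pasted at the end of server.properties. Later definitions of a key override earlier ones when the file is loaded.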

The ZooKeeper and Kafka data directories must already exist, so make sure that C:\zookeeper_data and C:\kafka_logs are there. You might have already learned all the above in the earlier section. The only difference is in the topic default values. We are setting the topic defaults to one, which makes sense because we will be running a single-node Kafka cluster.

Finally, add the Kafka bin\windows directory to the PATH environment variable. This directory contains a set of Kafka tools for the Windows platform. We will be using some of them in the next section.

Starting and Testing Kafka

Kafka needs ZooKeeper, so we will start ZooKeeper on your Windows 10 machine using the command below.

Start a Windows command prompt and execute the above command. It should start the ZooKeeper server. Minimize the command window and leave ZooKeeper running in it.

Open a new command window and start the Kafka broker using the command below.

The above command should start the single-node Kafka broker. Minimize the window and leave the broker running in it.

Now you can test ZooKeeper and Kafka. Execute the command below to check ZooKeeper and the Kafka broker's registration with the ZooKeeper server.
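A lighter check, sketched below in Python, is to confirm that both servers are accepting TCP connections. It assumes the default ports (2181 for ZooKeeper, 9092 for the Kafka broker) and a hypothetical helper name. It only shows that the ports are open, not that the broker has registered itself in ZooKeeper.

```python
import socket


def port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # Default ports; adjust if zookeeper.properties or server.properties differ.
    for name, port in (("ZooKeeper", 2181), ("Kafka broker", 9092)):
        state = "listening" if port_open("localhost", port) else "not reachable"
        print(f"{name} on port {port}: {state}")
```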

You can create a new Kafka topic using the command below.

You can list the available topics using the command below.

You can start a console producer and send some messages using the command below.

You can start a console consumer and check the messages that you sent using the command below.
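For reference, the sketch below assembles typical command lines for these steps as argument lists that could be passed to `subprocess.run`. It assumes Kafka 2.0.0 with its bin\windows directory on PATH, the default ports, a hypothetical install location, and a hypothetical topic named `test`. Newer Kafka releases replaced the `--zookeeper` and `--broker-list` options with `--bootstrap-server`, so treat the flags as version-specific.

```python
import ntpath  # applies Windows path rules on any operating system


def kafka_commands(kafka_home, topic="test"):
    """Build Kafka 2.0.0 command lines for a single-node Windows setup."""
    config_dir = ntpath.join(kafka_home, "config")
    zookeeper = "localhost:2181"
    broker = "localhost:9092"
    return {
        "start_zookeeper": [
            "zookeeper-server-start.bat",
            ntpath.join(config_dir, "zookeeper.properties"),
        ],
        "start_broker": [
            "kafka-server-start.bat",
            ntpath.join(config_dir, "server.properties"),
        ],
        "create_topic": [
            "kafka-topics.bat", "--create", "--zookeeper", zookeeper,
            "--replication-factor", "1", "--partitions", "1",
            "--topic", topic,
        ],
        "list_topics": ["kafka-topics.bat", "--list", "--zookeeper", zookeeper],
        "produce": [
            "kafka-console-producer.bat", "--broker-list", broker,
            "--topic", topic,
        ],
        "consume": [
            "kafka-console-consumer.bat", "--bootstrap-server", broker,
            "--topic", topic, "--from-beginning",
        ],
    }


if __name__ == "__main__":
    # Hypothetical install location; print each command for copy and paste.
    for name, args in kafka_commands(r"C:\kafka_2.12-2.0.0").items():
        print(f"{name}: {' '.join(args)}")
```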

Summary

In this post, we learned how to install a single-node Kafka broker on a local machine running Windows 10. This installation lets you run your Kafka application code locally and debug it from the IDE. In the next section, we will learn to configure and use an IDE for Kafka development.


The Kafka Connect TIBCO Source connector is used to move messages from TIBCO Enterprise Message Service (EMS) to Apache Kafka®.

Messages are consumed from the TIBCO EMS broker using the configured message selectors and written to a single Kafka topic. Kafka Connect transformations can be used to route messages to multiple Kafka topics.

The connector currently supports consuming JMS TextMessage and BytesMessage but not ObjectMessage or StreamMessage.

Note

If you are required to use the Java Naming and Directory Interface™ (JNDI) to connect to TIBCO EMS, there is a general JMS Source Connector available that uses a JNDI-based mechanism to connect to the JMS broker.

Prerequisites

The following are required to run the Kafka Connect TIBCO Source Connector:

Install the TIBCO Source Connector

You can install this connector by using the Confluent Hub client (recommended) or you can manually download the ZIP file.

Install the connector using Confluent Hub

Navigate to your Confluent Platform installation directory and run the following command to install the latest (latest) connector version. The connector must be installed on every machine where Connect will run.

You can install a specific version by replacing latest with a version number. For example:

Install the connector manually

Download and extract the ZIP file for your connector and then follow the manual connector installation instructions.

License

You can use this connector for a 30-day trial period without a license key.

After 30 days, this connector is available under a Confluent enterprise license. Confluent issues enterprise license keys to subscribers, along with providing enterprise-level support for Confluent Platform and your connectors. If you are a subscriber, please contact Confluent Support at [email protected] for more information.

See Confluent Platform license for license properties and License topic configuration for information about the license topic.

Configuration Properties

For a complete list of configuration properties for this connector, see TIBCO Source Connector Configuration Properties.

Note

For an example of how to get Kafka Connect connected to Confluent Cloud, see Distributed Cluster in Connect Kafka Connect to Confluent Cloud.

TIBCO Client Library

The Kafka Connect TIBCO Source Connector does not come with the TIBCO JMS client library.

If you are running a multi-node Connect cluster, the connector and TIBCO JMS client JAR must be installed on every Connect worker in the cluster. See below for details.

Installing TIBCO JMS Client Library

This connector relies on a provided tibjms client JAR that is included in the TIBCO EMS installation. The connector will fail to create a connection to TIBCO EMS if you have not installed the JAR on each Connect worker node.

The installation steps are:

Note

The share/java/kafka-connect-tibco-source directory mentioned above is for Confluent Platform. If you are using a different installation, find the location of the Confluent TIBCO Source Connector JAR files and place the tibjms JAR file into the same directory.

Schemas

The connector produces Kafka messages with keys and values that adhere to the schemas described in the following sections.

io.confluent.connect.jms.Key

This schema stores the incoming MessageID on the message interface. This ensures that if the same message ID arrives, which is unlikely, it will end up in the same Kafka partition.
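The reason a repeated message ID lands in the same partition is that Kafka's default partitioning is a deterministic function of the record key. The sketch below illustrates the idea only: Kafka's default partitioner hashes the serialized key with murmur2, whereas this example swaps in CRC32 from the Python standard library, and it treats the key as a plain string rather than the serialized key struct. The function name and the sample message ID are hypothetical.

```python
import zlib


def partition_for(message_id, num_partitions):
    """Map a message ID to a partition using a deterministic hash.

    CRC32 is a stand-in for the murmur2 hash used by Kafka's default
    partitioner; the principle (hash of key modulo partition count) is the same.
    """
    key_bytes = message_id.encode("utf-8")
    return zlib.crc32(key_bytes) % num_partitions


if __name__ == "__main__":
    first = partition_for("ID:EMS-SERVER.1234", 6)
    second = partition_for("ID:EMS-SERVER.1234", 6)
    print(first == second)  # same key, same partition
```

The mapping only stays stable while the topic's partition count is unchanged.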

The schema defines the following fields:

io.confluent.connect.jms.Value

This schema stores the value of the JMS message.

The schema defines the following fields:

io.confluent.connect.jms.Destination

This schema represents a JMS Destination, and is either queue or topic.

The schema defines the following fields:

io.confluent.connect.jms.PropertyValue

This schema stores the data found in the properties of the message. To ensure that type mappings are preserved, propertyType stores the type of the field. The corresponding field in the schema will contain the data for the property. This ensures that the data is retrievable as the type returned by Message.getObjectProperty().
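This is a tagged-value pattern: one field names the type, and a field with that name carries the data. The sketch below shows the pattern with Python dictionaries. Apart from propertyType, the field names (`boolean`, `long`, `double`, `string`) and the Python-to-JMS type mapping are assumptions made for illustration, and the helper names are hypothetical.

```python
def to_property_value(value):
    """Wrap a Python value in a propertyType-tagged record."""
    # bool must be checked before int because bool is a subclass of int.
    if isinstance(value, bool):
        kind = "boolean"
    elif isinstance(value, int):
        kind = "long"
    elif isinstance(value, float):
        kind = "double"
    elif isinstance(value, str):
        kind = "string"
    else:
        raise TypeError(f"unsupported property type: {type(value).__name__}")
    # The field named by propertyType holds the actual data.
    return {"propertyType": kind, kind: value}


def from_property_value(record):
    """Read the data back from the field that propertyType points to."""
    return record[record["propertyType"]]


if __name__ == "__main__":
    record = to_property_value(42)
    print(record, from_property_value(record))
```

A consumer that reads propertyType first can always pick the right field, so the original type survives the round trip.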

The schema defines the following fields:

Quick Start

This quick start uses the TIBCO Source Connector to consume records from TIBCO Enterprise Message Service™ - Community Edition and send them to Kafka.


Additional Documentation
